Architecture Overview

Telovix consists of two deployed components, the Console and the Sensor, connected over mutual TLS. The Console runs on operator infrastructure (self-hosted) or Telovix infrastructure (Cloud). Sensors run on each protected node. No component communicates with any third-party service during normal operation.

Component overview

| Component | Role | Summary |
|---|---|---|
| Telovix Console | Control plane | Serves the operator web UI and the sensor control-plane endpoint on a single TLS listener. Manages sensor enrollment and trust, stores fleet state, applies policy and pack assignments, records alerts and investigations, and runs scheduled background work. |
| Telovix Sensor | Node runtime | Runs on each protected node as a single self-contained binary. Collects runtime activity via an embedded eBPF engine, maintains an outbound mTLS connection to the Console, sends a heartbeat every 15 seconds, streams low-latency events over a persistent WebSocket, verifies policy signatures locally, and spools events to disk when the Console is unreachable. |

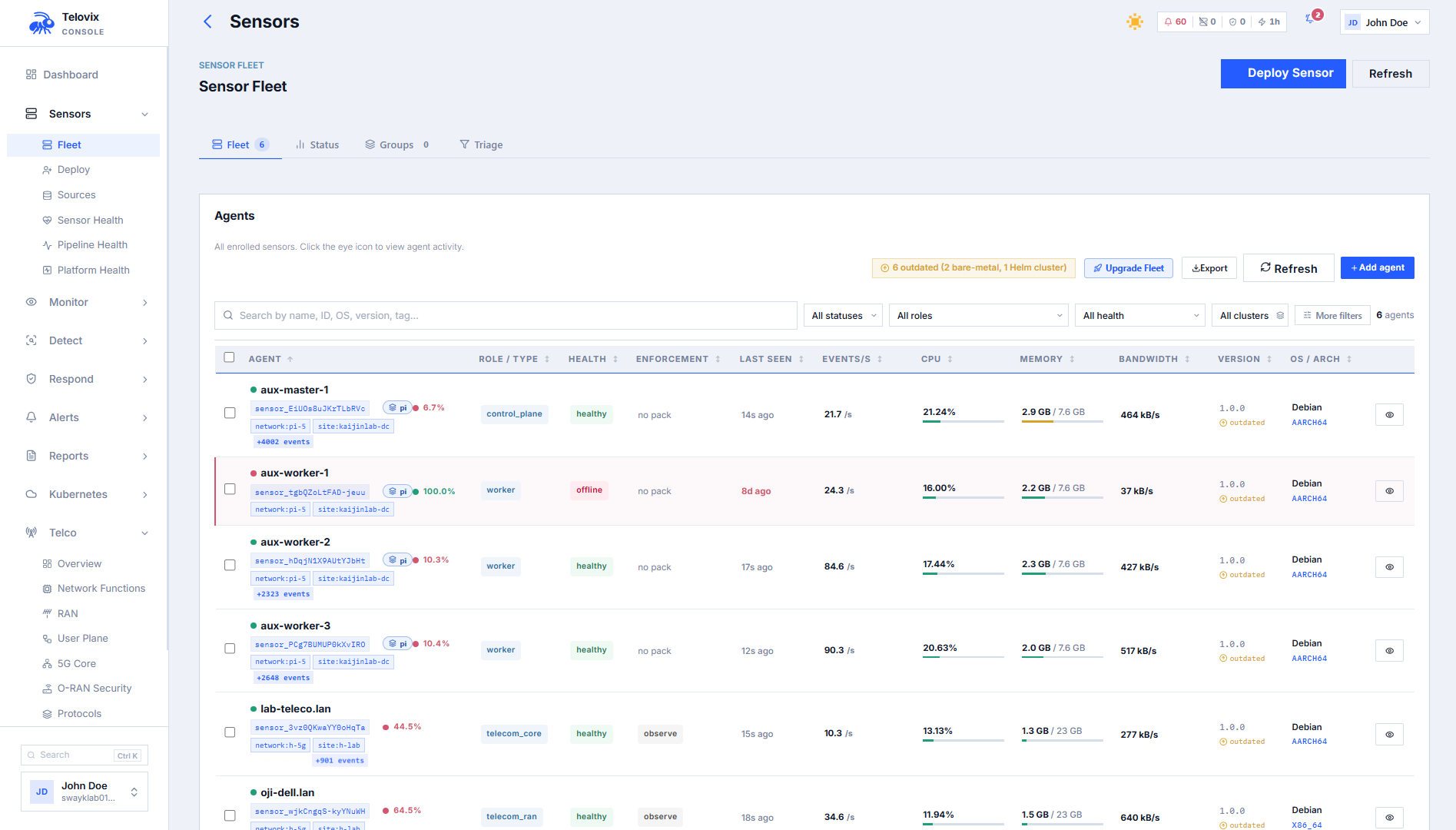

Platform verticals

The Console supports two verticals, selected during the setup wizard:

| Value | Behavior |
|---|---|
| standard | Core eBPF security only. Default. |
| telecom | Adds 5G Core and O-RAN NF monitoring, NGAP KPI tracking, and the Telco section in the Console UI. |

The Sensor must be deployed with the matching flavor (standard or telecom) to produce the data that the Console's telecom views expect. The flavor is selected at install time via the Deploy Sensor wizard in the Console.

Network topology

Diagram legend: control plane and sensors; storage; Scale tier (optional); license only.

- Sensor to Console: mTLS on :15483, outbound from sensor

- Sensor to Console: persistent WebSocket stream (500 ms tick)

- Redpanda path: Scale tier only, activated by TELOVIX_CONSOLE_REDPANDA_URL

- Portal to Console: license import only, no runtime link

All Sensor traffic is outbound from the Sensor to the Console; the Console never initiates a connection to a Sensor. The Console listens on a single TLS port (15483 by default in Telovix self-hosted deployments) that accepts both browser HTTPS and sensor mTLS. The TLS configuration uses allow_unauthenticated so that the Console can distinguish browser clients (no client certificate) from sensor clients (client certificate issued at enrollment) on the same port.
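The single-listener arrangement can be illustrated with a minimal Python sketch. It is not the Console's implementation: the function names `build_listener_context` and `classify_client` are hypothetical, and Python's `ssl.CERT_OPTIONAL` is used here only as a rough analogue of an allow_unauthenticated client verifier.

```python
import ssl
from typing import Optional


def build_listener_context(cert_file: str, key_file: str, sensor_ca_file: str) -> ssl.SSLContext:
    """Server-side TLS context that requests, but does not require, a client certificate."""
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    ctx.load_verify_locations(cafile=sensor_ca_file)
    # CERT_OPTIONAL: a presented certificate must validate against the CA,
    # but clients that present none (browsers) still complete the handshake.
    ctx.verify_mode = ssl.CERT_OPTIONAL
    return ctx


def classify_client(peer_cert: Optional[dict]) -> str:
    """Classify a connection from the value returned by SSLSocket.getpeercert()."""
    # getpeercert() returns None when the peer sent no certificate and an
    # empty dict when a certificate was sent but not validated.
    if peer_cert:
        return "sensor"
    return "browser"
```

In this sketch a request classified as "browser" would go on to session cookie authentication, while a "sensor" request would be matched against the enrolled sensor record.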

- Sensor to Console (:15483): outbound mTLS, heartbeat and WebSocket

- Browser to Console (:15483): HTTPS, session cookie auth

- Console to PostgreSQL: internal, operational state

- Console to ClickHouse: internal, runtime event analytics

- Console to Redpanda (:9092): internal, Scale tier only, configured during setup

- Console to Redis: optional, shared rate-limit state for multi-replica deployments

In Telovix self-hosted deployments, all four services (Console, PostgreSQL, ClickHouse, and optionally Redpanda) run inside the customer environment. In Telovix Cloud deployments, Telovix provisions and operates all infrastructure.

Storage layers

The Console uses three storage tiers with distinct purposes:

| Tier | Required | Default port | Purpose |
|---|---|---|---|
| PostgreSQL | Yes | 5432 | Transactional operational state: sensors, users, teams, policies, alerts, groups, pack assignments, compliance snapshots, investigations, audit records, license state. Raw events are never stored here. |
| ClickHouse | Yes | 8123 | Append-only high-volume analytics: eBPF runtime events, anomaly scores, process baselines, NGAP KPI history, energy metrics, and telco trend data. Default retention: 90 days. |
| Redpanda | No | 9092 | Kafka-compatible event queue. Recommended above approximately 1,500 nodes. When active, the Console produces events to the telovix.runtime_events topic and ClickHouse consumes them via its native Kafka table engine. When inactive (the default), events are inserted directly into ClickHouse per heartbeat. |

PostgreSQL is the authoritative source for all fleet control state. ClickHouse is the sole runtime event store. ClickHouse schema migrations are applied automatically at startup from versioned files in migrations-clickhouse/. Events are partitioned by month and expire after the analytics retention period (default: 90 days, configured in Console Settings).

Redpanda is activated by providing a broker URL during setup. Events are keyed by sensor_id so all events from a given sensor land on the same partition, enabling ordered replay per sensor. The producer uses idempotent delivery by default.
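The effect of keying by sensor_id can be shown with a small Python sketch. The helper names `partition_for` and `route` are hypothetical, and `zlib.crc32` is only a stand-in hash: real Kafka-compatible producers use their own partitioner, but the property illustrated (same key, same partition, order preserved per key) is the same.

```python
import zlib
from collections import defaultdict
from typing import Dict, Iterable, List


def partition_for(sensor_id: str, num_partitions: int) -> int:
    """Map a sensor_id key to a partition deterministically."""
    if num_partitions <= 0:
        raise ValueError("num_partitions must be positive")
    # Stand-in hash (assumption): any stable hash gives the same guarantee.
    return zlib.crc32(sensor_id.encode("utf-8")) % num_partitions


def route(events: Iterable[dict], num_partitions: int) -> Dict[int, List[dict]]:
    """Append each event to the partition chosen by its sensor_id key."""
    partitions: Dict[int, List[dict]] = defaultdict(list)
    for event in events:
        partitions[partition_for(event["sensor_id"], num_partitions)].append(event)
    return dict(partitions)
```

Because every event from one sensor lands on one partition, a consumer that reads that partition in offset order sees that sensor's events in the order they were produced.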

Sensors keep their own local state on disk under the sensor state directory (default /var/lib/telovix-sensor):

| Path | Purpose |
|---|---|
| client.key.pem | Active mTLS private key (mode 600, never leaves node) |
| client.cert.pem | Active mTLS client certificate |
| client.prev.key.pem | Previous mTLS private key (kept during renewal overlap window) |
| client.prev.cert.pem | Previous mTLS client certificate (kept during renewal overlap window) |
| console-ca.cert.pem | Console CA trust anchor |
| policy-signing.pub | Ed25519 public key for policy pack signature verification |
| sensor-state.json | Enrollment state: sensor ID, trust state, cert expiry, renewal status |
| assigned-pack.json | Current policy pack ID, version, enforcement state, namespace scope |
| compiled-policies/ | Active TracingPolicy YAML files deployed by the Console |
| events.jsonl | On-disk event spool (written when heartbeat fails, replayed on reconnect) |
| engine/v1.7.0/ | Extracted eBPF engine binaries and BPF object files |

Console startup sequence

On every startup the Console executes this sequence before accepting requests:

- Load configuration from the Console config file written by the setup wizard.

- Load or generate the policy-signing Ed25519 key material.

- Initialize the PostgreSQL connection pool and run schema migrations automatically.

- Load the embedded license verification trust anchor.

- Initialize the ClickHouse analytics client, the rate limiter, and the event sink (direct ClickHouse or Redpanda, depending on whether a Redpanda broker was configured during setup).

- Build the operator and sensor routers and merge them into one combined application.

- Validate or auto-generate the sensor TLS assets.

- In Telovix Cloud mode, run the managed bootstrap: import the license bundle and create the admin account from provisioning data if not already present. Both steps are idempotent.

- When background tasks are enabled and first-run setup is complete, start the following background loops: alert notification delivery, compliance report scheduling, daily fleet digest, fleet correlation, saved search notifications, SIEM forwarding, Parquet/S3 export, federation export, ClickHouse health monitoring, SBOM scan queue, and event retention cleanup.

- Bind the single TLS listener on the configured port (default 15484, but typically mapped to 15483 in production) and begin serving.

Background tasks are deferred until after the first-run setup wizard completes and the process restarts. In multi-replica deployments, only one designated instance should run the scheduled loops.

Rate limiting defaults: 1000 req/min for authenticated admin and operator traffic, 300 req/min for analysts, 200 req/min for unauthenticated traffic. With Redis, limits are shared across replicas; without Redis, each instance tracks independently.
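The per-role limits can be sketched as a fixed-window counter in Python. The windowing algorithm, the class name `FixedWindowLimiter`, and the role keys are assumptions for illustration; only the per-minute numbers come from the defaults above. This models the single-instance case, where counters live in process memory.

```python
import time
from typing import Callable, Dict

# Requests per minute, taken from the documented defaults.
LIMITS_PER_MINUTE = {"admin": 1000, "operator": 1000, "analyst": 300, "unauthenticated": 200}


class FixedWindowLimiter:
    """In-memory limiter: one counter per client per one-minute window."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._window = -1
        self._counts: Dict[str, int] = {}

    def allow(self, client_id: str, role: str) -> bool:
        limit = LIMITS_PER_MINUTE.get(role, LIMITS_PER_MINUTE["unauthenticated"])
        window = int(self._clock() // 60)
        if window != self._window:
            # A new minute started: forget all counts from the previous one.
            self._window = window
            self._counts.clear()
        count = self._counts.get(client_id, 0)
        if count >= limit:
            return False
        self._counts[client_id] = count + 1
        return True
```

With several replicas, each holding its own `FixedWindowLimiter`, a client could receive up to the limit from every replica, which is why shared state (Redis in the text above) is needed for a fleet-wide limit.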

Sensor internals

The Sensor is a single self-contained Rust binary (~89 MB) that embeds the eBPF engine as a compressed tarball. On first run it extracts the engine to its state directory under engine/v1.7.0/ and then enrolls with the Console.

On startup the Sensor checks for a PREEMPT_RT kernel by reading /proc/version. When a real-time kernel is detected, syscall-level kprobes that use BPF_MODIFY_RETURN are disabled because the BPF verifier rejects them on RT kernels. All other collection continues normally.
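A minimal sketch of this check, written in Python for illustration. The function names and the probe group labels are hypothetical, and the matching rule (a "PREEMPT_RT" marker, or "PREEMPT RT" with a space as printed by some older RT builds) is an assumption about what the version string contains.

```python
from pathlib import Path
from typing import List


def read_kernel_version(path: str = "/proc/version") -> str:
    """Return the kernel version banner, or an empty string if unreadable."""
    try:
        return Path(path).read_text(encoding="utf-8", errors="replace")
    except OSError:
        return ""


def is_preempt_rt(version_text: str) -> bool:
    """True when the banner carries a real-time preemption marker."""
    return "PREEMPT_RT" in version_text or "PREEMPT RT" in version_text


def enabled_probe_groups(version_text: str) -> List[str]:
    """Hypothetical probe groups; only the BPF_MODIFY_RETURN group is RT-sensitive."""
    groups = ["kprobes", "lsm_hooks"]
    if not is_preempt_rt(version_text):
        groups.append("modify_return_kprobes")
    return groups
```

Calling `enabled_probe_groups(read_kernel_version())` on a non-RT kernel returns all three groups; on an RT kernel the last group is left out and the rest is unchanged.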

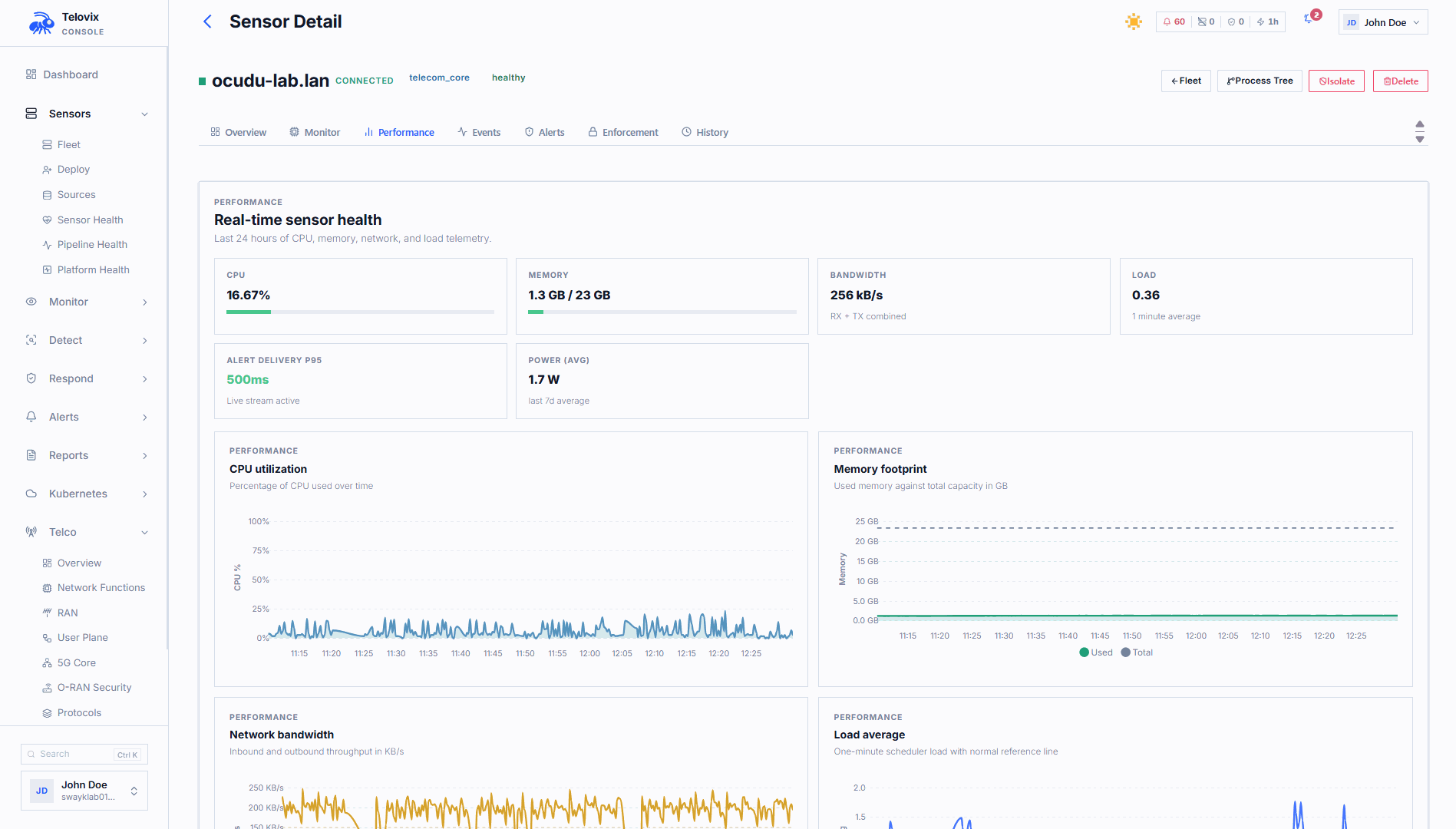

Steady-state operation runs two concurrent loops:

Heartbeat loop (every 15 seconds)

- Check the engine subprocess. Restart it if it has crashed.

- Read new events from the engine JSONL export file, advance the read cursor.

- Drain up to 100 spooled events from events.jsonl if the previous heartbeat failed.

- POST to the heartbeat endpoint over mTLS. The payload carries: recent events, engine state, trust state, active TCP connections, listening services, active flows, resource metrics, system information, event pipeline metrics, and all telecom-specific reports if the telecom flavor is active.

- On a successful response, apply any pack assignment, enforcement policy, or custom policy changes returned by the Console.

- On failure, append the unsent batch to events.jsonl (on-disk spool, capped at 100,000 events total; oldest events are discarded when full).
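The spool-and-drain behavior of the heartbeat loop can be sketched in Python as follows. This is an in-memory stand-in for events.jsonl, not the Sensor's code: `EventSpool`, `heartbeat_cycle`, and the `send` callback are hypothetical names, and keeping replayed events in the spool until the send succeeds is an assumption about failure handling.

```python
from collections import deque
from itertools import islice
from typing import Callable, Iterable, List

SPOOL_CAP = 100_000          # maximum spooled events (from the text above)
DRAIN_PER_HEARTBEAT = 100    # spooled events replayed per heartbeat


class EventSpool:
    """Bounded FIFO: when full, appending discards the oldest events."""

    def __init__(self, cap: int = SPOOL_CAP) -> None:
        self._events: deque = deque(maxlen=cap)

    def append_batch(self, batch: Iterable) -> None:
        self._events.extend(batch)  # deque(maxlen=...) drops from the left

    def peek(self, limit: int) -> List:
        return list(islice(self._events, limit))

    def discard(self, count: int) -> None:
        for _ in range(min(count, len(self._events))):
            self._events.popleft()

    def __len__(self) -> int:
        return len(self._events)


def heartbeat_cycle(spool: EventSpool, new_events: Iterable,
                    send: Callable[[List], bool],
                    drain_limit: int = DRAIN_PER_HEARTBEAT) -> bool:
    """One heartbeat: replay a slice of the backlog ahead of the new events."""
    fresh = list(new_events)
    replay = spool.peek(drain_limit)
    if send(replay + fresh):
        spool.discard(len(replay))   # delivered, safe to drop from the spool
        return True
    spool.append_batch(fresh)        # keep the unsent batch for a later cycle
    return False
```

At 100 replayed events per 15-second heartbeat, a full 100,000-event spool would take 1,000 successful heartbeats (roughly four hours) to drain, which is the gradual backlog recovery the limits imply.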

Policy packs received in heartbeat responses are verified against the Ed25519 policy-signing.pub key before any TracingPolicy YAML is written to disk or applied to the engine. Policies with invalid or missing signatures are rejected.

Split-plane endpoints

The Console also accepts two purpose-built endpoints that decouple health, events, and inventory into separate flows:

| Endpoint | Purpose | Body limit |
|---|---|---|
| POST /sensors/events | Event batch ingestion only. Fire-and-forget with sequence number ACK. Accepts Content-Encoding: zstd. | 4 MB |
| POST /sensors/inventory | Process list, connections, Kubernetes state, and telecom snapshots. Delta-capable via is_full_snapshot flag. | 2 MB |

These endpoints use the same mTLS authentication as the heartbeat. The existing heartbeat endpoint remains fully supported and is the default path for all currently deployed sensors.

WebSocket stream loop (every 500 ms)

The Sensor maintains a persistent WebSocket connection over mTLS. This connection forwards low-latency events to the Console without waiting for the next heartbeat and receives server-to-sensor refresh signals such as immediate policy pushes. The Console sends a JSON ping every 10 seconds over this connection. Any sensor with an active WebSocket connection is treated as healthy by the Console, bypassing the 90-second staleness window entirely. On disconnect, the sensor reconnects with exponential backoff from 1 second up to a cap of 30 seconds.
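The reconnect schedule and the health rule can be sketched in Python. The doubling factor and the inclusive 90-second boundary are assumptions (the text gives only the 1-second start, the 30-second cap, and the 90-second window), and the function names are hypothetical.

```python
from typing import Iterator

STALENESS_WINDOW_SECONDS = 90.0


def reconnect_delays(initial: float = 1.0, cap: float = 30.0,
                     factor: float = 2.0) -> Iterator[float]:
    """Yield reconnect delays that grow geometrically up to a fixed cap."""
    delay = initial
    while True:
        yield min(delay, cap)
        delay = min(delay * factor, cap)


def is_healthy(websocket_connected: bool, seconds_since_heartbeat: float) -> bool:
    """An active WebSocket short-circuits the heartbeat staleness check."""
    if websocket_connected:
        return True
    return seconds_since_heartbeat <= STALENESS_WINDOW_SECONDS
```

Under these assumptions the first seven delays are 1, 2, 4, 8, 16, 30, and 30 seconds. A production client would typically reset the generator after a successful connection and may add jitter so that many sensors do not reconnect at the same instant.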

mTLS enrollment flow

During enrollment the Sensor generates its private key locally and sends only the CSR and the bootstrap token to the Console. The private key never leaves the node.

The enrollment token has a TTL of 15 minutes (configurable in Console Settings). The Console stores only the SHA-256 hash of the token. The token is consumed on first use and cannot be reused. Re-enrollment uses a separate re-enrollment token generated from the sensor's existing record, so a new sensor identity is not created.
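The token rules (hash-only storage, 15-minute TTL, single use) can be sketched in Python. The class `EnrollmentTokens`, its in-memory dictionary, and the token format are hypothetical stand-ins for whatever the Console actually persists.

```python
import hashlib
import secrets
import time
from typing import Callable, Dict

TOKEN_TTL_SECONDS = 15 * 60  # default TTL from the text above


def _digest(token: str) -> str:
    return hashlib.sha256(token.encode("utf-8")).hexdigest()


class EnrollmentTokens:
    """Stores only SHA-256 digests; the plaintext token is returned once."""

    def __init__(self, clock: Callable[[], float] = time.time) -> None:
        self._clock = clock
        self._expiry_by_hash: Dict[str, float] = {}

    def issue(self) -> str:
        token = secrets.token_urlsafe(32)
        self._expiry_by_hash[_digest(token)] = self._clock() + TOKEN_TTL_SECONDS
        return token

    def consume(self, token: str) -> bool:
        # pop() removes the record on first presentation, so a token can
        # never be accepted twice, whether or not it was still valid.
        expires_at = self._expiry_by_hash.pop(_digest(token), None)
        return expires_at is not None and self._clock() < expires_at
```

Because only the digest is stored, a leaked copy of the token table does not reveal usable tokens; the lookup works by hashing the presented token and comparing digests.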

Certificate lifecycle

Sensor client certificates default to a TTL of 720 hours (30 days). The Console includes renewal state in each heartbeat response when the certificate is approaching expiry.

| Trust health state | Condition |
|---|---|
| healthy | Certificate is valid and not within the renewal window |
| renewal_recommended | Within 72 hours (3 days) of expiry |
| renewal_due | Within 24 hours of expiry, already expired, or a recent trust error in the last 15 minutes requires immediate attention. |
| degraded | Trust metadata or recent connection errors indicate the control path is not fully healthy. |
| revoked | The Console has revoked this sensor's identity. All future mTLS requests are blocked immediately. |
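The table can be read as a small decision function. The Python sketch below is one possible interpretation: the function name, the `degraded` input flag, and above all the precedence order (revoked, then renewal_due, then degraded, then renewal_recommended) are assumptions, since the table does not say which state wins when several conditions hold.

```python
from datetime import datetime, timedelta
from typing import Optional


def trust_health(now: datetime, not_after: datetime, revoked: bool = False,
                 last_trust_error: Optional[datetime] = None,
                 degraded: bool = False) -> str:
    """Derive a trust health state from certificate expiry and recent errors."""
    if revoked:
        return "revoked"
    remaining = not_after - now  # negative once the certificate has expired
    recent_error = (last_trust_error is not None
                    and now - last_trust_error <= timedelta(minutes=15))
    if remaining <= timedelta(hours=24) or recent_error:
        return "renewal_due"
    if degraded:
        return "degraded"
    if remaining <= timedelta(hours=72):
        return "renewal_recommended"
    return "healthy"
```

With the default 720-hour certificate TTL, a sensor under this reading spends the first 27 days in healthy, two days in renewal_recommended, and the final day in renewal_due unless it renews earlier.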

Each renewal generates a fresh key pair and a new CSR. The private key is never reused across renewals. The old certificate is kept as client.prev.cert.pem and remains accepted for a 24-hour overlap window so connectivity is not interrupted during the handoff. After the overlap window, the previous certificate is no longer accepted.

Revocation is immediate. The sensor record retains the revoked status for audit and lifecycle tracking.

Event delivery pipeline

This sequence describes how a kernel event on a protected node becomes a visible record in the Console:

- The eBPF engine inside the Sensor attaches kprobes and BPF LSM hooks in the kernel and writes raw events as JSONL to the engine export file.

- The Sensor reads new events from the JSONL file, normalizes them to a common event schema, enriches each event with full process ancestry, workload context (container ID, Kubernetes namespace, workload type and name), and network namespace ID.

- The Sensor correlates DNS lookups with TCP connections, aggregates fork and exec bursts, scores each event against per-binary behavioral baselines (anomaly scoring), and for the telecom flavor, attaches protocol and NF role context.

- Every 500 ms, if the WebSocket connection is up, low-latency events are forwarded on that path.

- Every 15 seconds, the Sensor posts a heartbeat to the Console over mTLS, carrying the enriched event batch alongside control-plane state.

- If the heartbeat fails, the event batch is appended to the on-disk spool. The spool holds up to 100,000 events. On the next successful heartbeat, up to 100 spooled events are prepended to the payload, draining the backlog gradually.

- The Console authenticates the client certificate, records operational state changes in PostgreSQL, and publishes events to the active event sink.

- If Redpanda is active, the Console produces each event as a JSON message to the telovix.runtime_events topic. ClickHouse consumes it via the native Kafka table engine and inserts it into the runtime_events table. If Redpanda is not configured, the Console inserts events directly into ClickHouse via HTTP with zstd compression (Content-Encoding: zstd), reducing typical batch sizes from ~150 KB to ~8 KB at the wire level.

- The Console applies inventory delta suppression server-side: process lists, TCP connections, listening services, container images, and Kubernetes inventory are only written to PostgreSQL when a hash of the incoming data differs from the previously stored value. Unchanged inventory is skipped, eliminating redundant writes on every heartbeat cycle.
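The inventory delta suppression in the last step can be sketched in Python. The class `InventoryStore`, the choice of SHA-256 over canonical JSON, and the `writes` counter standing in for a PostgreSQL write are all assumptions for illustration; the text states only that a hash of the incoming data is compared with the stored value.

```python
import hashlib
import json
from typing import Any, Dict, Tuple


class InventoryStore:
    """Writes an inventory section only when its content hash has changed."""

    def __init__(self) -> None:
        self._hashes: Dict[Tuple[str, str], str] = {}
        self.writes = 0  # stand-in for rows written to PostgreSQL

    def submit(self, sensor_id: str, kind: str, items: Any) -> bool:
        # Canonical JSON (sorted keys, fixed separators) so that equal
        # content always produces the same digest.
        encoded = json.dumps(items, sort_keys=True, separators=(",", ":"))
        digest = hashlib.sha256(encoded.encode("utf-8")).hexdigest()
        key = (sensor_id, kind)
        if self._hashes.get(key) == digest:
            return False  # unchanged since the last heartbeat: skip the write
        self._hashes[key] = digest
        self.writes += 1
        return True
```

In this sketch list order is significant, so a sender that wants stable hashes would need to emit items in a consistent order (for example, sorted by PID or by address and port).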

Telovix self-hosted vs Telovix Cloud

| Aspect | Telovix self-hosted | Telovix Cloud |
|---|---|---|
| Console operated by | Customer | Telovix |
| PostgreSQL and ClickHouse | Customer-managed | Telovix-managed |
| Data residency | Customer infrastructure | Telovix cloud infrastructure |
| License delivery | Download from Portal, import via setup wizard | Auto-injected at provisioning time |
| First-run setup | Console runs setup wizard (database config, license import, admin creation) | Console bootstraps automatically; no wizard shown |
| Sensor connect URL | https://<console-host>:15483 (Telovix self-hosted default) | Telovix-assigned URL, typically served on port 443 (Telovix Cloud) |
| Console upgrades | Customer manages binary updates | Telovix manages the full stack |
| Sensor deployment | Customer deploys Sensors on their own nodes | Customer deploys Sensors on their own nodes |

In both models, Sensors are always deployed by the customer on their own nodes. Event data flows from the sensor to whichever Console endpoint the sensor is configured with.

Federation

Federation supports deployments where a single Console is not the right boundary, such as environments with regional data sovereignty requirements or very large fleets.

The federation role is configured in Console Settings:

| Role | Behavior |
|---|---|
| standalone | Default. No cross-Console data sharing. |
| regional | Manages local sensors and pushes aggregated data (sensor summaries, event aggregates, compliance snapshots) to a central Console every 300 seconds. |
| central | Receives ingest from regional Consoles and provides unified cross-region visibility. |

The region identifier and human-readable label (such as emea-rack-7) are set in Console Settings.